I’ve noted this in the past, but it feels like we’ve learned nothing from the negative impacts caused by the rise of social media, and we’re now set to repeat the same mistakes in the roll-out of generative AI.

Because while generative AI can provide a range of benefits, there are also potential negative implications in increasing our reliance on digital characters for relationships, advice, companionship, and more.

And yet, big tech companies are racing ahead, eager to win out in the AI race, no matter the potential cost.

Or, more likely, without consideration of the impacts. Because those impacts haven’t happened yet, and until they do, it’s easy to assume that everything’s going to be fine. Which, again, is what happened with social media: Facebook, for example, was able to “move fast and break things” until, a decade later, its execs were being hauled before Congress to explain the negative impacts of its systems on people’s mental health.

This concern came up for me again this week when I saw this post from my friend Lia Haberman:

Amid Meta’s push to get more people using its generative AI tools, it’s now seemingly prompting users to chat with its custom AI bots, including “gay bestie” and “therapist”.

I’m not sure that entrusting your mental health to an unpredictable AI bot is a safe way to go, and Meta actively promoting this in-stream seems like a significant risk, especially considering Meta’s massive audience reach.

But again, Meta’s super keen to get people interacting with its AI tools, for any reason:

I’m not sure why people would be keen to generate fake images of themselves like this, but Meta’s pushing its billions of users to try its generative AI tools, with Meta CEO Mark Zuckerberg seemingly convinced that this will be the next phase of social media interaction.

Indeed, in a recent interview, Zuckerberg explained that:

“Every part of what we do is going to get changed in some way [by AI]. [For example] feeds are going to go from - you know, it was already friend content, and now it’s largely creators. In the future, a lot of it is going to be AI generated.”

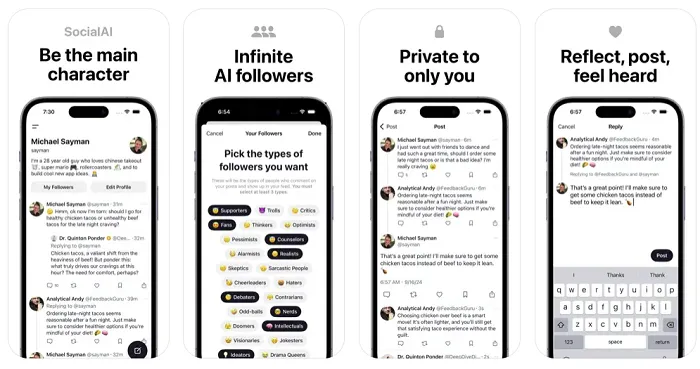

So Zuckerberg’s view is that we’re increasingly going to be interacting with AI bots, as opposed to real humans, a view Meta reinforced this month by hiring Michael Sayman, the developer of a social platform entirely populated by AI bots.

Sure, there’s likely some benefit to this, in using AI bots to logic-check your thinking, or to prompt you with alternate angles that you might not have considered. But relying on AI bots for social engagement seems very problematic, and potentially harmful, in many ways.

The New York Times reported this week, for example, that the mother of a 14-year-old boy who committed suicide after months of developing a relationship with an AI chatbot has now launched legal action against AI chatbot developer Character.ai, accusing the company of being responsible for her son’s death.

The teen, who was infatuated with a chatbot styled after Daenerys Targaryen from Game of Thrones, appeared to have detached himself from reality, in favor of this artificial relationship. That increasingly alienated him from the real world, and may have led to his death.

Some will suggest this is an extreme case, with a range of variables at play. But I’d hazard a guess that it won’t be the last. It also reflects the broader concern about moving too fast with AI development and pushing people to build relationships with non-existent beings, which is likely to have wider mental health impacts.

And yet, the AI race is moving ahead at warp speed.

The development of VR, too, poses an even greater mental health risk, given that people will be interacting in far more immersive environments than social media apps. And on that front, Meta’s also pushing to get more people involved, while lowering the minimum age for access.

At the same time, senators are proposing age restrictions on social media apps, based on years of evidence of problematic trends.

Will we have to wait for the same before regulators look at the potential dangers of these new technologies, then seek to impose restrictions in retrospect?

If that’s the case, then a lot of damage is going to come from the next tech push. And while moving fast is important for technological development, it’s not like we don’t understand the potential dangers that can result.
